Rachel Gates

Sound Designer, Audio Engineer, Composer

Hello!

I'm Rachel Gates, and I'm an upcoming graduate from Rensselaer Polytechnic Institute. I'll be completing my dual major in B.S. Music/Game Simulation Arts and Sciences (GSAS) this May. I specialize in audio engineering, sound design, music composition, and production. Here's a demo reel for some of my work:

Throughout university, I've honed my skills through both game and studio pipelines. I thrive when put in a group setting, and I'm not afraid to think outside of the box when it comes to my creative process (especially when making music or creating foley)! I love what I do, and resonate with transforming fiction into reality through the magic of sound.

In my spare time, I am a bassist (both acoustic/electric) and percussionist who enjoys playing in various music groups ranging from jazz big bands, to small combos, to indie rock/alt. Whenever I'm not playing music or working in the studio, you can likely find me doodling, gardening, or tending to my indoor plants. :)

Overview

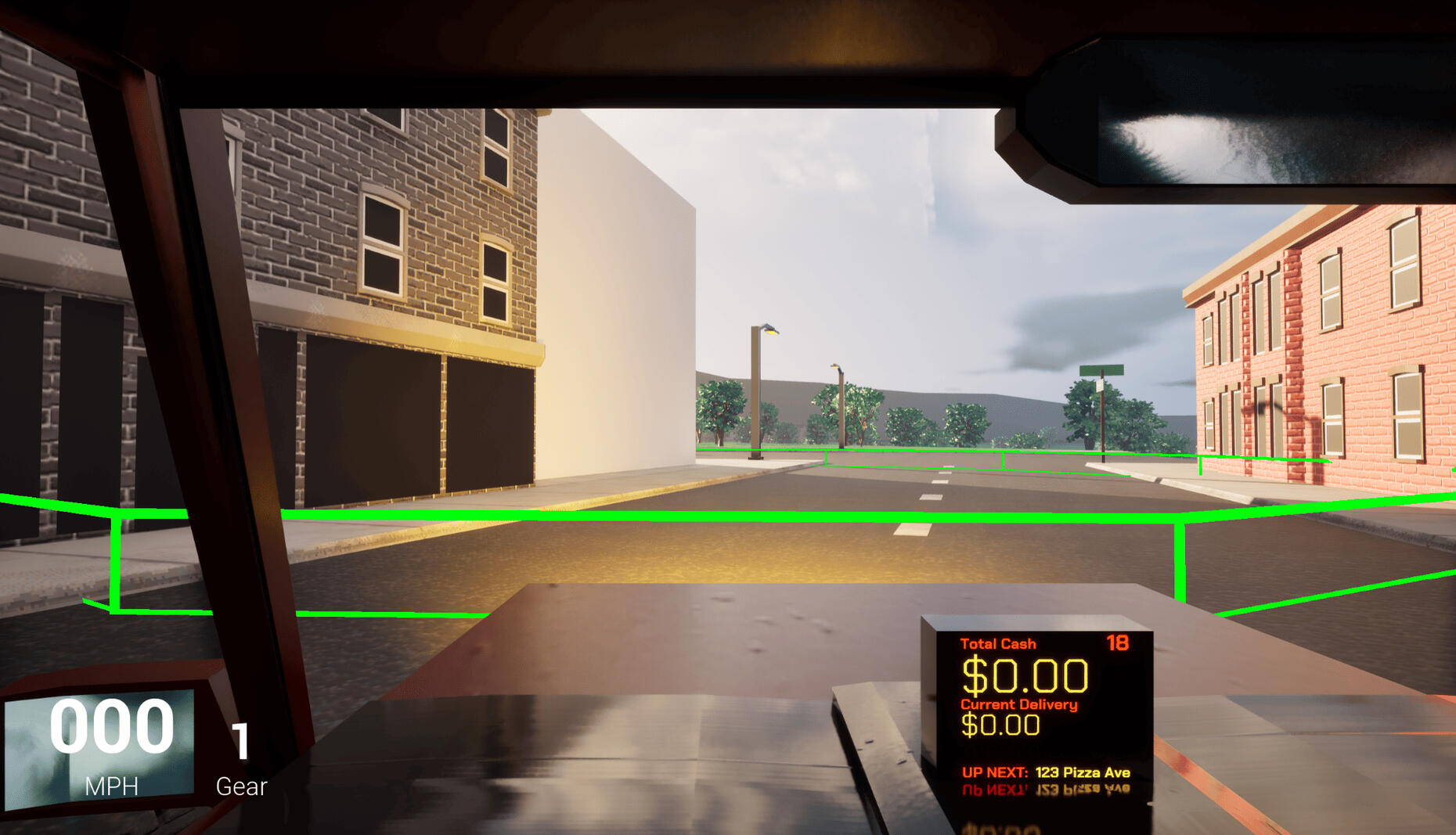

This game was created in Unreal Engine as a two-week project for Rensselaer Polytechnic Institute's experimental game design course. I created and implemented all music and sound for this project using Wwise, FL Studio, and Avid Pro Tools.

Backseat Driver takes a creative spin on two-player gameplay, where players aim to deliver as many pizzas as possible within the time limit. However, the player who controls the car as the "driver" cannot see the screen. To find each address, player two must guide the driver along the road while reading the map they have on hand.

Overview

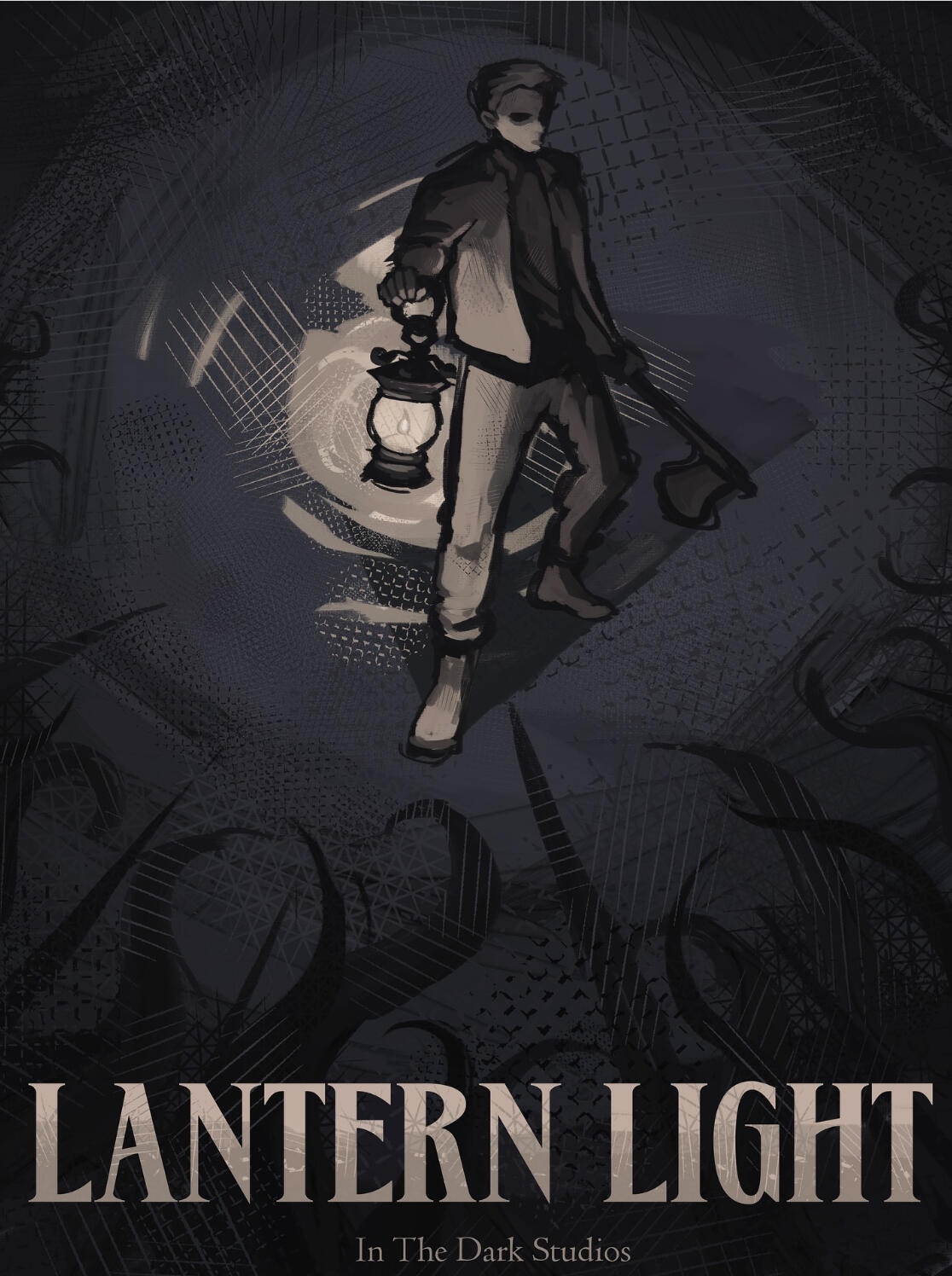

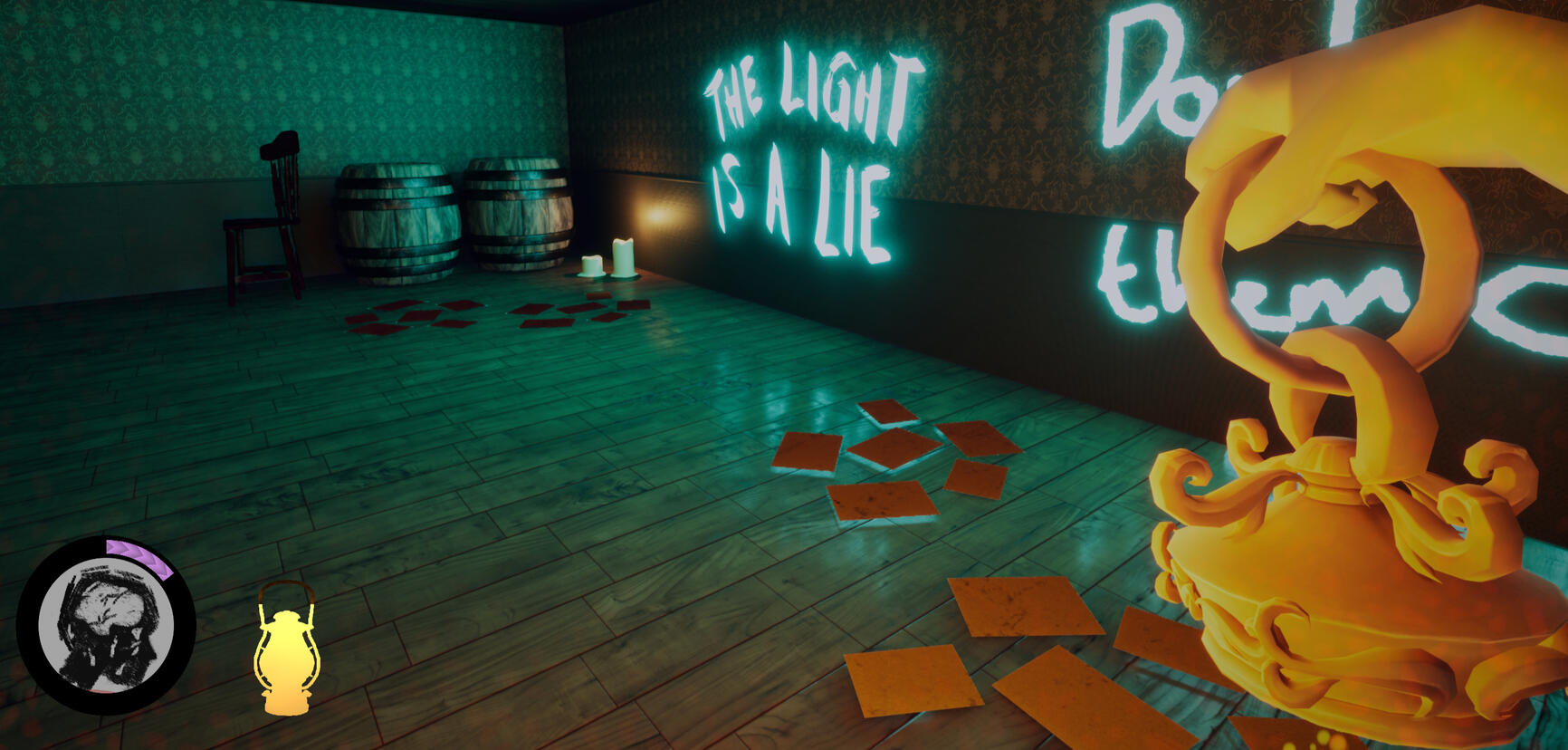

This game was created in Unreal Engine as a senior capstone project for Rensselaer Polytechnic Institute's game design program. As Lead Sound Designer for my student-led indie dev team (In the Dark Studios), I was in charge of all sound design, foley recording, VA direction, and audio system implementation. All sound effects were either recorded using Avid Pro Tools or designed using FL Studio, with Wwise used for programming and implementation.

Lantern Light is one of the official games representing the NY State Pavilion at GDC 2026 in San Francisco.

Synopsis: Lantern Light is a first-person action/horror/puzzle game set within a manor overcome by corruption and twisted monstrosities. Armed with only an axe and the fragile glow of your lantern, you must tread along the verge of madness while traversing the dark. Use your resources wisely as you swap between combat and environmental puzzles. Keep the light alive, preserve your sanity, and confront your deepest fears to see what truly awaits you in the darkness.

Music & Tech 2 Project Blog

Welcome to my blog! Here, I will post updates on both research and progress for my Music & Tech 2 Project, as part of the course at Rensselaer Polytechnic Institute.

Topics of Interest

Papers that align with my interests for this project:

In the first paper, "Chorale" Violon Harmonization: A Practical, Compositionally-based System for Generating Live Harmonies, Christopher Tignor goes into detail about his transducer-based harmonizer MIDI rig, which pairs with acoustic instruments (such as the violin used in the primary example, or anything that can have a transducer pickup placed on it). I've been very drawn to the idea of incorporating some sort of MIDI mechanic to alter my performance while playing acoustic bass, especially as a complementary accompaniment like the one used here. I found this paper to be a good insight into the processes that may be used to create this kind of joint acoustic-electric performance environment, in which one side complements the other. It also details the kinds of physical setups that could be used for controls, which in this case settled on a MIDI pedal board.

The second paper, Exploiting Mimetic Theory for Instrument Design, delves even deeper into which kinds of physical and psychological design choices make an electronic instrument "believable", and which do not. It centers on the creation and design iteration of a guitar-like instrument, and highlights how a musician's gestures and movements become associated with what the listener would "expect" to hear from the instrument in response. I pulled this article because I believe that intuitive design would play a very important role if I were to make some kind of physically interactive system that could be incorporated into the live playing of an actual instrument. Including something that not only makes visual sense to the listener, but also feels reasonable and unobtrusive to the musician when combined with their existing playing, would need intricate planning, in addition to multiple trials to see what kind of interactive system works best.

Lastly, in Guitar Augmentation For Percussive Fingerstyle: Combining Self-Reflexive Practice and User-Centred Design, the author delves into the concept of augmented guitar designs, specifically ones that complement the "percussive fingerstyle" technique. I would like to incorporate my usual jazz pizzicato style on upright bass (which is already a physically interactive instrument) into my project, and I notice that at times I subconsciously make percussive accents on string contact rather than intentionally producing a specific note. I could potentially turn this into a more intentional act by creating an interactive, percussive interface on my upright bass, mapping sensors or transducers that can detect the velocity of a note and output a desired accompaniment to what was intended.

Progress Update #1

Updated 3/14/26 (Proposal):

As of right now, I am doing further research to decide which program I want to use to create a functional prototype of my project. My potential candidates at the moment are Max MSP and ChucK, with the latter being something I have very little knowledge about. As for the scope of the prototype, I don't expect to be able to incorporate the hardware needed to connect my upright bass to a programming language for a fully functional system. However, I'm curious whether I could emulate this system by connecting a microphone or a MIDI keyboard to a programming language instead.

As for what the prototype will actually be, I will be making a program that can react to analog transducer inputs. The idea is that it will detect either the velocity of a note or the velocity of contact with an interface and, depending on that value, produce a note or sound that corresponds to that assigned number (most likely of a percussive nature, though there's potential for me to play around with the actual signal itself). I have already made a sample pack of various sounds from a live drum kit, which I would like to incorporate and map into the playback of my prototype. I am now deciding how exactly to attach these sounds to select parameters, and which program would be the best base for this system, especially if it is meant to be used live. On one hand, I have more experience using Max MSP, but I am aware that ChucK has the potential to work as well. If I choose ChucK, I will have the additional learning curve of a language that I am very unfamiliar with.

I've linked below the drum sound sample pack that I created for this project, using raw recordings from a live drum kit. This was recorded in RPI's HASS Media Studio in DCC 174.
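The velocity-to-sample idea described above could be sketched roughly as follows. This is only an illustration in Python, not the prototype itself (which is planned for Max MSP or ChucK). The velocity zones, the sample file names, and the `pick_sample` function are all hypothetical placeholders, and the velocity is assumed to arrive on a MIDI-style 0-127 scale.

```python
# Hypothetical velocity zones: (upper velocity bound, sample file name).
# The file names are placeholders, not the names used in the actual sample pack.
VELOCITY_ZONES = [
    (40, "ghost_snare.wav"),
    (90, "rim_click.wav"),
    (127, "kick.wav"),
]


def pick_sample(velocity):
    """Map a MIDI-style velocity (0-127) to a (sample name, gain) pair."""
    # Clamp out-of-range input so the lookup always lands in a zone.
    velocity = max(0, min(127, int(velocity)))
    # Scale playback gain linearly with velocity (0.0 to 1.0).
    gain = round(velocity / 127, 3)
    for upper_bound, sample in VELOCITY_ZONES:
        if velocity <= upper_bound:
            return sample, gain
    # Not reached after clamping, but keeps the function total.
    return VELOCITY_ZONES[-1][1], gain


if __name__ == "__main__":
    for v in (20, 64, 127):
        print(v, pick_sample(v))
```

Keeping the zones in a single table means the mapping can be retuned during rehearsal without touching the lookup logic.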

Updated 3/27/26 (Further Research/Planning):

Currently, I am learning ChucK in order to use it for this project! I've begun following basic video tutorials on its core concepts and practicing in miniAudicle. Even though the project I will be referencing most closely was based in Max and Ableton Live, I figured it would be worth a shot to learn and adjust to a new programming tool, as I've taken an interest in ChucK. The main challenge will likely be working out how to translate my Max knowledge and way of thinking into a new programming language. My ChucK skills are still very basic, so I've been working on becoming more familiar with its syntax.

As I become more familiar with ChucK, I've been looking deeper into some of the sources cited in the paper I selected about Guitar Augmentation for Percussive Fingerstyle. In the prototype used in the basic testing phase, the author used a setup involving 3 piezo pickups routed through high-capacitance piezo preamps. High capacitance is a must for the detection to work properly. Ideally, I'd like to have one piezo beneath the fingerboard of the bass, one on the front of its body, and maybe one on the shoulder of the instrument. I've looked on Amazon for piezos I could use, and I haven't been able to find any ready-made 3-way piezo pickup system, meaning I'll likely have to make it myself. Before I make any purchases of piezos and preamps, I've begun a small side project of researching the ideal way to solder together a 3-piezo pickup system that would be suitable for this project. I've linked some papers that may be useful in this search to this page, as I've saved them for future reference.

Something else I am considering for the future of this project is using Wekinator to distinguish between the gestures I make while playing upright bass, especially as I aim to trigger certain samples depending on what I do. There will need to be a minimum threshold for my playing, as I don't want sounds to be triggered by every little gesture. Rather, I want my system to pick up intentionally percussive noises, so that it adds to my playing rather than hindering it. I am currently looking into a ChucK/Wekinator combination to replace the Max/Ableton Live setup used in the paper that inspired this project.
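The minimum-threshold idea above could look something like the sketch below. It is a plain-Python illustration rather than ChucK or Wekinator code, and everything in it is an assumption: the `detect_hits` function name, the default threshold of 0.3, and the refractory window of 4 samples are placeholder values that would need tuning against real piezo signals.

```python
def detect_hits(signal, threshold=0.3, refractory=4):
    """Return (index, peak) pairs for hits whose level reaches `threshold`.

    `signal` is a sequence of amplitude values (assumed normalized to -1..1).
    After a hit is found, the next `refractory` samples are treated as part
    of the same hit, so one strike does not trigger several samples.
    """
    hits = []
    i = 0
    while i < len(signal):
        if abs(signal[i]) >= threshold:
            # Take the loudest value in the window as the hit's strength.
            window = signal[i:i + refractory]
            peak = max(abs(x) for x in window)
            hits.append((i, peak))
            # Skip the rest of the window before listening again.
            i += refractory
        else:
            i += 1
    return hits


if __name__ == "__main__":
    # Two deliberate strikes separated by quiet playing noise.
    example = [0.0, 0.05, 0.1, 0.8, 0.6, 0.2, 0.05, 0.0, 0.1, 0.5, 0.9, 0.3, 0.0]
    print(detect_hits(example))
```

The peak value returned for each hit could then serve as the "velocity" used to choose which sample to play.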

Overview

Da(ta) Bus was created in Unity as a submission for Wolfjam's 2024 Hackathon. I composed the music and edited the sound effects for this project using FL Studio and Avid Pro Tools.

In this casual puzzle game, connect the circuits to route Buster to his destination elsewhere on the motherboard. Be careful where you place your tiles; you may end up blocking Buster's path!

Overview

This game was created in Unity as a project for Rensselaer Polytechnic Institute's Game Development 2 course. I created and implemented all music and sound for this project using FMOD, FL Studio, and Avid Pro Tools.

Umbral Pursuit is a stealth-based roguelike game where you play as Osk, the master of shadows. Reclaim what was once yours by sneaking around the Light Mage's mansion, morphing into shadows, and assassinating guards.

Experience

Rensselaer Music Association - Chief Audio Engineer

(2024-Present)

As the Chief Audio Engineer for the Rensselaer Music Association, I’ve played a role in both maintaining our student-run studio space and training studio assistants on how to properly handle and use our studio gear. I’ve collaborated with over 8 student music groups in both recording sessions and live performances, and I continue to maintain and run our studio schedule. Everything from handling studio bookings and equipment maintenance to choosing new gear and organizing and cleaning the space is within my domain.

Rensselaer Polytechnic Institute - Union Show Tech

(2024-Present)

As a part of the Student Union Showtech Audio Team, I help set up and run live sound systems at various events across campus. These events range from live music groups to student showcases to guest performers visiting campus.

EMPAC - Assistant Audio Engineer

(2023-2025)

At EMPAC, I assisted resident artists, exhibits, and performances by setting up audio equipment, recording events, and assisting at front of house. I've also participated in past acoustic research, collecting various ambisonic recordings of our concert hall.